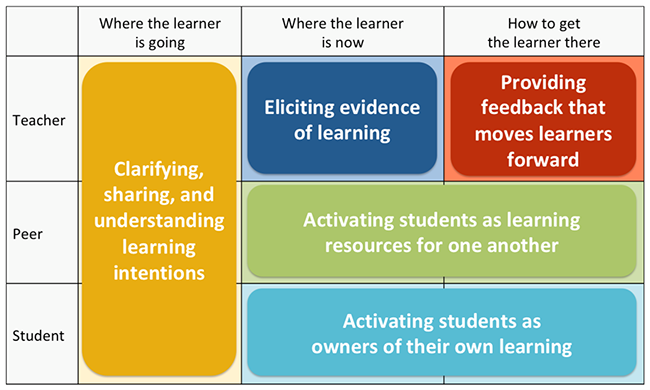

We're laying out practical remote techniques for a formative assessment framework's five instructional strategies. Catch up on our first installment, "Understanding Learning Intentions."

Figure 1: 5 Instructional Strategies

Once you are clear about where you want students to go, you need to know where they are. Most people assume that when we remember things, the memory itself does not change. But in fact, whenever we retrieve knowledge from memory, that act makes the memory stronger. The harder the memory is to retrieve, the greater the strengthening effect.

So, when beginning a new topic, it is helpful to get students to write down—or in the case of younger students, to tell you—what they know about the topic. This activation of prior knowledge makes subsequent learning more memorable.

Eliciting Evidence of Learning

Whether in an online session or in face-to-face teaching, teachers frequently test the memory retrieval process with checks for understanding. However, when we think of checks as an assessment process, we have to think about the quality of evidence we have for instructional decisions in terms of depth and breadth.

Depth

Are we asking questions that give us insights into students' understanding, and are we sure that the right answers indicate the right thinking? Consider the following question:

There are two flights per day from Newtown to Oldtown. The first flight leaves Newtown each day at 9:20 a.m. and arrives in Oldtown at 10:55 a.m. The second flight from Newtown leaves at 2:15 p.m. Assuming both flights take the same amount of time, when does the second flight arrive in Oldtown?

It is tempting to assume that if students can provide the correct answer, then they understand how to do time calculations. But in this case, none of the required calculations carries past the hour, so a student who believes that there are 100 minutes in an hour will get the same answer as one who knows there are only 60 minutes in an hour. To be effective, we must design a question so that students with the right thinking and students with the wrong thinking don't produce the same response.
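The overlap can be checked with a little arithmetic. The sketch below is illustrative only: the `arrival` helper and the alternative 2:40 p.m. departure are assumptions introduced here, not part of the original question. It works out the second flight's arrival under the correct 60-minute model and under the mistaken 100-minute model.

```python
def arrival(dep1, arr1, dep2, minutes_per_hour):
    """Return (hour, minute) for the second flight's arrival.

    Times are (hour, minute) pairs on a 24-hour clock.
    minutes_per_hour is 60 for correct thinking and 100 for the
    misconception described in the text.
    """
    def to_total(t):
        hour, minute = t
        return hour * minutes_per_hour + minute

    # Flight duration, measured in whatever "minutes" the student believes in.
    duration = to_total(arr1) - to_total(dep1)
    # Add the duration to the second departure and convert back.
    return divmod(to_total(dep2) + duration, minutes_per_hour)


first_departure, first_arrival = (9, 20), (10, 55)

# Original question: second flight leaves at 2:15 p.m. (14:15).
# Hypothetical redesign: second flight leaves at 2:40 p.m. (14:40).
for second_departure in [(14, 15), (14, 40)]:
    correct = arrival(first_departure, first_arrival, second_departure, 60)
    mistaken = arrival(first_departure, first_arrival, second_departure, 100)
    print(second_departure, "correct:", correct, "mistaken:", mistaken)
```

With the original 2:15 p.m. departure, both models give 3:50 p.m. With the hypothetical 2:40 p.m. departure, the addition carries past the hour, so the correct model gives 4:15 p.m. while the mistaken model gives "3:75," and the two kinds of thinking no longer produce the same response.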

Breadth

For eliciting evidence in terms of breadth, it is essential to get information from all, or at least most, of the students in the group. If you are only getting information from the confident students, it's impossible to make instructional decisions that meet everyone's learning needs.

It isn't always about fancy tools. When remote teaching is synchronous, it may be preferable to use the "finger voting" method, where students hold up fingers in response to a multiple-choice question. After all, if we want to create a classroom culture where students feel happy about making mistakes and expressing opinions in a group context, we probably should not record every response in a spreadsheet, unless students can use the information to see advances in their own thinking. Collect-and-go approaches that feed forward only to the teacher can be confusing to remote learners who are in the process of mastering self-reflection. Without having access to the information themselves, learners have to rely on impressions from the instructor.

By spending time designing questions before online sessions begin, we can make better-informed judgments about whether our students are ready to move on. It can also be helpful in remote teaching to increase the element of construction and richness in assessment tasks (Scalise, 2011; Shute, 2009). Instructors can design valuable questions with formats that offer an interactive edge to support the student experience. For example, teachers in history or economics who are teaching schools of thought or different economic models can allow students to sort quotes on colored "tiles" from the sources being studied. Or, instructors might employ a drag-and-drop concept map or ask students to populate a timeline. In each of these cases, the technology sparks conversations, reflection, and collaboration.

For remote Mistake-Centered Action Exploration (MCAE), which we discussed in our first installment as an approach that inculcates a love of mistakes, try what we call the "Space Interval" assignment. Extending activation of prior knowledge combined with strategic competence, space interval brings back the opportunity to elicit evidence on examples that students flagged previously while examining their mistakes. Software tools built to keep a tally of how successful the student is on each subsequent attempt can choose any of the flagged examples for more practice. The likelihood of activating a prior mistake goes down as the mistake arises less often. This creates a type of strategic scaffolding. It also gives students the opportunity to draw on their adaptive reasoning over the range of what they have learned.

Feedback That Moves Learners Forward

What kinds of feedback are most effective? Should feedback be immediate or delayed, specific or generic, verbal or written, or supportive or critical? Research over the last three decades has confirmed that the unsatisfactory answer to all of these questions is, "It depends" (Kluger & DeNisi, 1996).

For diffident learners, feedback may need to be supportive, but for more confident learners, it may be better to "cut to the chase" and tell students what they need to do to improve. Research has also shown that although students tend to express a preference for immediate feedback, delayed feedback can be more effective, perhaps because it challenges students to retrieve things from memory (Mullet, 2014).

Despite this mix of recipes for success, there are some reasonably universal guidelines that should work as well in online learning environments as they do in face-to-face environments.

The first guideline, and perhaps the most important, is that feedback's main purpose is to improve the student, not the work. Feedback that tells students exactly what to do, or worse, corrects their work, is unlikely to improve future performance. As in sports, the purpose of feedback is not to correct the last pitch or tackle but to improve future games. Yet, in many classrooms, feedback focuses on how to make the last piece of work better rather than the next one or, in Douglas Reeves' memorable description, "the post-mortem rather than the medical" (2008).

Second, if feedback is to help students improve, they must engage with it. For example, a student might be told that of the 20 subtractions they have completed for homework, five are wrong, and they must "find them and fix them." The idea here is that feedback should be in the form of an engaging, concrete task, sort of like detective work, thus minimizing the likelihood of an emotional reaction. If, as Robyn Jackson says, we should "never work harder than our students," then feedback should be more work for the recipient than for the giver.

Relationships Make or Break Effective Feedback

How students react to feedback depends on the relationship between student and teacher. As the work of David Yeager and his colleagues has shown, when teachers stress that they have high standards and believe that the student can reach them, students are far more likely to engage with the feedback (2013).

There isn't a simple formula for effective feedback, but any teacher can improve by asking their students, "How did you use my feedback to grow?" If the students can't give a good answer to this question, then something needs to change.

In remote teaching for this formative strategy, on-screen markup as group feedback during synchronous sessions has been the focus of much interest. This is done as screen sharing, where students see online what the instructor is drawing, with a chat tool live for "back channels" or discussions among the participants. Live markup and chat as feedback may, in fact, increase engagement. The moving stylus and a simple visual, even if very primitive, help.

An easy guide for on-screen markup is Dan Roam's "6 x 6" rule from his book The Back of the Napkin, which suggests that teachers learn six quick sketch types and associate them with six major questions: who/what, how much, where, when, how, and why (2008). In this approach, if we are responding to a "who/what" question, the "portrait" is often a quick head sketch or simple shape. For "how much," Roam recommends turning to the chart sketch; for "where," a map squiggle; for "when," a timeline; and for "how," a flowchart of cause and effect. "Why" can draw on any of these and may also be well-served with a multiple variable plot. With a handful of simple tools, teachers can provide live visual feedback in many contexts.

Applying the Mistake-Making Lens

To thread in mistake-centered exploration at the secondary level, we can offer feedback through the MCAE error log, or a multidimensional display tool that examines what we did and why. An error log for remote teaching in response to an interim assessment in a science or math classroom, for instance, uses green and red bars to indicate correct and mistaken responses (alongside written or vocal feedback).

During the assessment, the student flags which questions or tasks they struggled with or guessed on. Time information helps students understand how they are using their time. Taking much longer than most students but still deriving a strong solution may indicate a missing strategy that the student would like to learn. Taking much less time than other students with a high mistake rate may indicate the student needs support to engage. Below the error log, students classify mistakes and can then compare their feedback to their understanding of shared expectations.
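The two time-based readings described above can be sketched as a simple rule. This is a minimal illustration, not how any particular error log tool works: the `time_signal` function, its threshold parameters (`slow_factor`, `fast_factor`, `strong_rate`, `high_rate`), and the use of the peer median as "most students" are all assumptions made for the example.

```python
from statistics import median


def time_signal(student_seconds, peer_seconds, mistake_rate,
                slow_factor=1.5, fast_factor=0.5,
                strong_rate=0.2, high_rate=0.5):
    """Suggest a time-based reading of one student's assessment.

    student_seconds: time this student spent on the assessment
    peer_seconds:    list of times spent by other students
    mistake_rate:    fraction of this student's responses that were mistaken
    All thresholds are hypothetical defaults, chosen only for illustration.
    """
    typical = median(peer_seconds)

    # Much longer than most students, but still a strong result.
    if student_seconds >= slow_factor * typical and mistake_rate <= strong_rate:
        return "possible missing strategy"

    # Much less time than other students, with a high mistake rate.
    if student_seconds <= fast_factor * typical and mistake_rate >= high_rate:
        return "may need support to engage"

    return "no time-based signal"


peers = [600, 700, 800]  # hypothetical peer times in seconds
print(time_signal(1200, peers, 0.1))
print(time_signal(300, peers, 0.6))
print(time_signal(700, peers, 0.3))
```

A rule like this only prompts a conversation. The student's own flags and mistake classifications remain the main evidence, and any real thresholds would need to be agreed on by the teacher and class.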